Lateralization of the cerebral hemispheres

Introduction

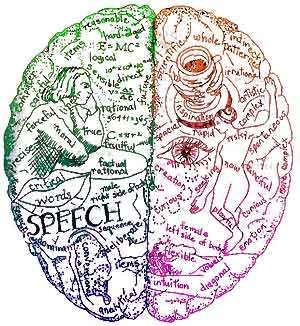

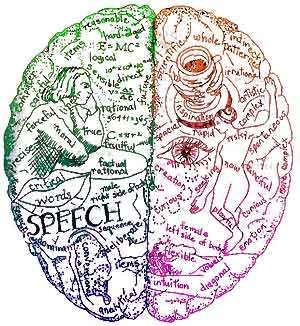

In grade school, I recall that the idea of being "left brained" or "right brained" was quite popular. Teachers would give us tests of our drawing or math ability to determine which half of our brain was "dominant." These days, the right is generally considered the creative and emotive half while the left is analytical and logical. These generalizations are somewhat outdated. As you will read, researchers have continually tried to assign concrete roles to the versatile, complex hemispheres of the brain. After talking a bit about the beginnings of our knowledge of hemispheric lateralization, I will go into some modern studies and theories about the two halves of the brain. This is a long one, so you might want to set some time aside or break it down into parts if you're reading on the fly. Enjoy!

A brief history of lateralization theories

Since the 1800's, neurologists have been fascinated by the division of labor between the right and left hemispheres of the cerebral cortex. The specializations attributed to each hemisphere have been repeatedly rewritten as new case studies and empirical data have entered our understanding of cerebral lateralization.

It was 1861 when the publication of Broca's breakthrough case study of a man with verbal expressive aphasia revealed the left hemisphere's dominance for language. The unfortunate gentleman had lost the ability to speak, although he could still make noises and understand language. An autopsy revealed that the man had profound atrophy of the posterior portion of the second and third frontal gyri of his left hemisphere, now known as Broca's area. This area has been repeatedly confirmed as a key area involved in the motor functions necessary for speech production.

After Broca's breakthrough discovery, reports poured in about the various disorders found in patients with left hemisphere lesions, including Wernicke's identification of receptive aphasia in 1874, Exner's identification of agraphia in 1881, and Lissauer's findings on agnosia in 1890 (Cutting 9-12).

The left hemisphere continued to be viewed as the "verbal hemisphere" into the 1950's. As added support for the theory of left hemispheric verbal dominance, Milner's 1958 examination of epileptic patients found that those who had undergone a left temporal lobectomy suffered more significantly in recall of spoken stories than those with removal of the right. The right was eventually deemed the "spatial hemisphere," with Milner's study of epileptic patients with lateralized temporal lobectomies being a large contributor to this theory. It seemed that those with the right temporal lobe removed had difficulty identifying the scene in a picture but less difficulty with verbal recall than those who had left temporal lobectomies.

The 1960's brought new ideas regarding the distribution of labor between the hemispheres. Throughout the early 1900's it was common knowledge among physicians that the right hemisphere seemed to have a link with emotion, but the idea was not popularized until the 1960's. Again, temporal lobectomy patients were the main influence for these generalizations. It seemed that those without the left temporal lobe tended to suffer depression while those lacking the right leaned toward euphoria or apathy. A tendency for patients with left hemisphere damage to suffer depression is still seen today (Cutting 24-26).

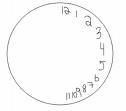

In the 1970's the verbal/nonverbal dichotomy lost much of its momentum as research began to show the important role of the right hemisphere in understanding metaphor and emotion in speech, as well as its contribution to the fluency and tonality of language production. Instead, a new dichotomy began to form in which the right hemisphere was seen as the "Gestalt" processor while the left was considered detail-oriented. This new theory mainly arose from studies of split-brain patients--people whose corpus callosum (the connecting fibers between hemispheres) had been severed to control epilepsy. These patients were better able to reconstruct whole pictures (such as the circular face of a clock) with their left hand and left visual field (controlled by the right hemisphere) and more able to focus on parts (such as the numbers on a clock) when working with their right hand and right visual field (controlled by the left hemisphere) (Cutting 21-23).

Today, the idea of hemispheric lateralization is so popular that terms like "left brained" and "right brained" are used (and often misused) colloquially. Although one cannot be literally left brained or right brained without severe damage to or removal of a hemisphere, the terms are informally used to describe how creative or analytical a person is. The right hemisphere is considered creative in the popular view, while the left hemisphere is known as the analytic half. There are, of course, faults in all of these extreme generalizations that have been made through the ages. Cutting notes the dilemma with this interpretation in his text, The Right Cerebral Hemisphere and Psychiatric Disorders:

"To call the left hemisphere analytic is heuristically unhelpful. It only provokes the questions--analytic of what? and how does it analyze?" -Cutting, 77

Sound and the hemispheres

To examine the known functions of the left and right cerebral hemispheres to date, let us begin with one of the first and most well known lateralizations: the distribution of verbal skills in the left and right hemispheres. Typically, the left hemisphere plays the chief role in speech production, as it is the site of Broca's and Wernicke's areas, but in a few people both hemispheres play some role in speech production, and in some left handed people the right hemisphere is dominant (containing both Broca's and Wernicke's areas). For about ninety percent of the population, though, the left hemisphere is dominant for speech (Cohen 32-33).

The idea of the left hemisphere as the verbal hemisphere arises from the many speech disorders that can be seen when portions of the left hemisphere are diseased or ablated. Verbal expressive aphasia (also known as Broca's aphasia), as mentioned earlier, is seen in patients with damage to Broca's area. The disorder results in laborious, grammatically incorrect speech. The symptoms include difficulty with function words, such as "the," "a," "in," or "on," and slow, effortful speech production. Patients are still able to produce content words, such as nouns, verbs, and adjectives. Because Broca's area is part of the secondary motor cortex of the frontal lobe, the difficulties that result from Broca's aphasia are thought to arise from the inability to fall back upon the motor memories that the lips, tongue, and mouth rely upon for forming fluent speech.

Wernicke's aphasia is another startling condition that can arise from damage to the left hemisphere. Located in the auditory cortex of the left temporal lobe, Wernicke's area and its surrounding tissues are responsible for speech comprehension and the conversion of thoughts into meaningful speech. The area is directly connected to Broca's area by a series of axons, creating a smooth connection between speech comprehension and production in unaffected people. When Wernicke's area is damaged, fluent speech can still be produced due to the motor memory of Broca's area, but content words are often replaced with nonsense (Carlson 482-495).

When the language areas of the left hemisphere are severely damaged, patients are sometimes able to recover lost verbal abilities in the corresponding areas of the right hemisphere. Mainly younger patients, especially those below the age of three, are able to make full recoveries (Habedank B, Haupt WF., Heiss WD., Herholz K., Kessler J., Thiel A, Winhuisen L).

The right hemisphere, too, plays a role in speech comprehension and production. It is essential for recognition of tone and the emotional expression in a speaker's voice (Sander K, Scheich H). Many case studies show that lesions of the right cerebral hemisphere often result in a decreased ability to detect the emotion in another’s words. Prosody (the rhythm, emphasis, and melody of speech) is also largely handled by the right hemisphere (Carlson 499-501, Young 53-54). Although speech can still be understood by those lacking the Broca's and Wernicke's areas of the left hemisphere, the right hemisphere cannot produce speech on its own in most people.

Even in the absence of actual language, the auditory processing of the hemispheres has a fundamental lateralization dependent upon the type of auditory stimulus provided. According to fMRI studies, the brain responds to increased temporal variation in sound with more dominance in the left Heschl's gyrus, while an increase in spectral variation in sound shows more activity in the right superior temporal gyrus and right posterior superior temporal sulcus (Bishop DV, Jamison HL, Matthews PM., Watkins KE).

Functional MRI studies of people listening to music also show lateralization. The right hemisphere seems to be more involved in the interpretation of music than the left. Again, we see the right hemisphere playing a larger role in deciphering tones and rhythm. Professional musicians, on the other hand, showed a stronger left hemispheric lateralization when listening to music. It is theorized that this may be due to their analytic interpretation of the music; they already have an orderly categorical system by which to interpret the sound, whereas novices do not (Huang, Chen, Wang, and Chung 187).

Visual perception in the hemispheres

It is especially difficult to define the roles of the hemispheres in visual spatial representation due to a lack of terminology and definition for different types of visual spatial abilities. Although it is fairly clear that there is a difference in the ways the hemispheres process visual spatial information, where the line is drawn as to which hemisphere does what seems to be very difficult to categorize.

Beginning with the basics, there does not seem to be a large difference in the ability of either hemisphere to detect a visual stimulus in its visual field. Several studies in the sixties and seventies, including Filbey and Gazzaniga in 1969, Jeeves and Dixon in 1970, and Bryden in 1976, reported that neither patients with left hemisphere damage nor those with right hemisphere damage had difficulty detecting light dots or other visual stimuli in their intact visual fields. A hemispheric difference in the perception of visual stimuli seems first to be noticed when defining the orientation of a seen object. Multiple studies show that the right hemisphere holds a superior ability to distinguish a line’s orientation relative to the horizontal or vertical axis (Young 8-12).

Once we move on from presenting patients with basic shapes to entire pictures, new distinctions between the hemispheres can be identified. Visual-object agnosia, caused by lesions of the left parieto-occipital region, results in an inability to identify the category to which an object belongs. Patients are still able to identify isolated elements and whole scenes, indicating that the left hemisphere is essential for the categorization of objects (Cutting 13-14, 76).

Again, much research seemed to point to the left hemisphere playing a role in identifying parts while the right perceived objects as wholes. The idea that the left hemisphere is necessary for perceiving parts creates a dilemma: how deeply can we consider an object or scene a whole or in parts? For example, I could say the forest is the whole and the trees the parts, or the tree is the whole and the branches are the parts, or the branches are the whole and the cells are the parts. At which point does the left hemisphere become responsible for identifying the pieces of the whole and the right for putting the whole together? It seems the theory that the left perceives parts while the right perceives wholes is too general. A better way to describe the role of the left hemisphere might be to say that it provides the formal relationships between objects as they fall into categories and subcategories (e.g. to say that a Dodge Spirit falls into the "car" category) while the right hemisphere considers how these representations reflect the order of the actual world.

An interesting theory of how the left and right hemispheres cooperate in object recognition comes from Warrington and Taylor's 1978 experiments. They noted that the first stage in object recognition is perceptual categorization of the object by its physical nature, in which the right hemisphere collates all possible spatial configurations of an object so that it can be perceived from any visual angle. Next, the left hemisphere adds a semantic category to the object (again supporting the idea that the left is the hemisphere of language). Their support for this theory comes from studies in which patients with right hemisphere lesions consistently have difficulty matching the same objects presented from odd angles (Cutting 75-76).

Along these lines a later theory by Kosslyn in the late 80's suggested that the left and right hemispheres worked independently rather than in the serial fashion proposed by Warrington. The right could encode shapes from multiple perspectives, he suggested, while the left funneled a range of shapes into a single, symbolic representation that could be easily named (Cutting 77-80).

In addition to superiority in recognition of three dimensional objects presented at odd angles, the right hemisphere also seems to be dominant in its ability to recognize facial expressions. This could be said to be a part of its overall excellence in Gestalt reasoning, but also might be attributed to the right hemisphere's reputation as the "emotional hemisphere" (Cutting 26).

Unilateral neglect

In patients with extensive damage to either the left or right hemisphere, paralysis of the opposite side of the body may result. Interestingly, a phenomenon known as unilateral neglect is typically only seen in those with lesions of the right cerebral hemisphere with corresponding paralysis of the left side of the body (Cutting 36). Patients with unilateral neglect completely ignore their entire left visual field as if it did not exist. For example, they may draw a clock with numbers only on the right side, or when asked to mark the middle of a line they may make a mark far to the right of the middle.

As described in Oliver Sacks's case studies, sometimes patients are able to understand their situation on an intellectual level, yet still have difficulty resolving the problem. One of Sacks's patients, Mrs. S, suffered a massive stroke affecting the posterior regions of her right cerebral hemisphere. The result was unilateral neglect of her left visual field. After having her situation explained to her, she was able to combat the obstacle by turning almost full circle to the right to recover the missing half of her meals or papers, although she was still unable to grasp the concept of turning left, as left simply did not exist to her (Sacks 77-79).

Another, more extreme symptom of a lesion to the right hemisphere can be full-blown denial. Patients with denial not only neglect their left visual field, but also deny the fact that they are paralyzed. In his case studies, V.S. Ramachandran describes some patients who would go out of their way to deny their paralysis. One patient, when asked of her paralyzed left arm "whose arm is this?" responded that it must be her brother's, not her own, because it appeared to be rather hairy (Ramachandran 127-133).

When damage spreads as far as the ventromedial frontal lobe of the right hemisphere, denial often expands beyond the patient's paralysis and body image into other areas as well. Patients may deny any out of the ordinary behavior or situation, from eating candy to the existence of a diagnosed brain tumor, whereas no such problems were noted in patients with corresponding lesions in the left hemisphere (Ramachandran 142-143).

Tying it all together

A fascinating theory of lateralization that takes much of the research to date into account comes from V.S. Ramachandran in his book, Phantoms in the Brain. The function of the left hemisphere, Ramachandran writes, is primarily the preservation of stability. This may be why we see cases of denial and neglect in patients with right hemispheric lesions but not in those with left: their intact left hemisphere strives to maintain the status quo. This also explains the euphoric or apathetic nature of those with right hemisphere damage; if they cannot perceive that something is wrong, then they do not worry about the problem. The right hemisphere, on the other hand, is charged with assimilating novel information. Again, we see that it must take a wholesale approach instead of categorizing items into preexisting schemes. Take visual perception again as an example. The right hemisphere must be able to recognize an object from a new angle, at this point taking in the novel information. The left hemisphere is then able to categorize this information, sorting it into the filing system of the mind. As Ramachandran jokingly suggests,

"The right hemisphere is a left-wing revolutionary generating paradigm shifts, whereas the left hemisphere is a die hard conservative that clings to the status quo"-Ramachandran, 136

In conclusion, it seems that not all is yet known about the distribution of labor between the right and left cerebral hemispheres. In the majority of the population, it appears that the left hemisphere is a place for systematic categorization, such as the parts of language and music that can be organized and the things in life which can be readily cataloged. The right hemisphere is more elusive, dealing in emotions and abstractions; it helps us to take in novel information that we do not yet have a name for.

Sources

Bishop DV, Jamison HL, Matthews PM, Watkins KE. "Hemispheric Specialization for Processing Auditory Nonspeech Stimuli." Cerebral Cortex. 10 (2005): 1093

Carlson, Neil R. Physiology of Behavior, Eighth Edition. New York: Pearson Education, Inc. 2004.

Cohen, David. The Secret Language of the Mind. San Francisco: Chronicle Books, 1996.

Cutting, John. The Right Cerebral Hemisphere and Psychiatric Disorders. New York: Oxford University Press, 1990.

Habedank B, Haupt WF, Heiss WD, Herholz K, Kessler J, Thiel A, Winhuisen L. "From the left to the right: How the brain compensates progressive loss of language function." Brain and Language. (2006)

Ramachandran, V.S. and Blakeslee, Sandra. Phantoms in the Brain. New York: William Morrow and Company, Inc. 1998.

Sacks, Oliver. The Man Who Mistook His Wife for a Hat and Other Clinical Tales. New York: Touchstone, 1998.

Sander K, Scheich H. "Left auditory cortex and amygdala, but right insula dominance for human laughing and crying." Journal of Cognitive Neuroscience. 17.10 (2005): 1519

Wang, Huang, Chen, and Chung. "Monitoring Music Processing of Harmonic Chords using fMRI: Comparison between Professional Musicians and Amateurs." International Society for Magnetic Resonance in Medicine (2006): 187

Young, Andrew W. Functions of the Right Cerebral Hemisphere. London: Academic Press, 1983.

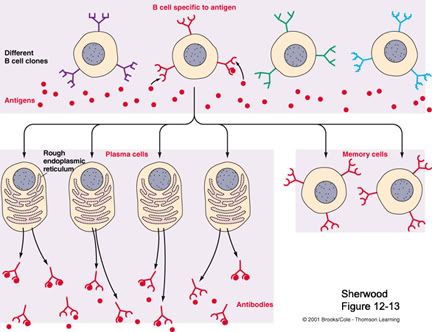

A fascinating theory of lateralization that takes much of the research to date into account comes from V.S. Ramachandran in his book, Phantoms in the Brain. The function of the left hemisphere, Ramachandran writes, is primarily the preservation of stability. This may be why we see cases of denial and neglect in patients with right hemispheric lesions but not in those with left hemispheric lesions: the intact left hemisphere strives to maintain the status quo. This may also explain the euphoric or apathetic nature of those with right hemisphere damage; if they cannot perceive that something is wrong, then they do not worry about the problem. The right hemisphere, on the other hand, is charged with assimilating novel information. Again, we see that it must take a wholesale approach instead of categorizing items into preexisting schemes. Take again, for example, visual perception. The right hemisphere must be able to recognize an object from a new angle, taking in the novel information. The left hemisphere is then able to categorize this information, sorting it into the filing system of the mind. As Ramachandran jokingly suggests,

To suggest that one's character and drive reside in mere cortical connections, to reduce a feeling as grand and consuming as love to chemicals and synapses, seems insulting to us as human beings because it appears to demystify these concepts. René Descartes (at right) thought the physical body was connected to the soul via the pineal gland. The "mind" and "body" were two distinct components of a person. In the book Descartes' Error (which will be used extensively throughout this essay), Antonio Damasio suggests that the mind is not "reduced" when it is put in the context of the brain. We only consider it insulting because we do not fully understand the wonder, plasticity, and complexity of the brain. This distinction between mind and brain can, in some cases, even be damaging. Individuals with neurologically driven mental illnesses are still condemned in the public eye instead of being given the compassion one might give to an individual with a disorder of any other organ, like the heart or kidney. Damasio (1994) wrote of the mind-brain distinction:

This individual displays symptoms of a psychological disorder of character called the "Phineas Gage matrix" (named after the first documented case study, whose skull is at left). This so-called psychological disorder has true neurological roots. When damage is done directly to the ventromedial sector of the prefrontal cortex, this remarkable change in character is observed. A myriad of case studies support this claim, thanks in part to the cruel practice of prefrontal leucotomy, which was a commonplace cure for severe anxiety in the 1940s. The practice would cure the anxiety but left patients with an altered personality often likened to "the mind of a child." This analogy is not inaccurate, as the prefrontal cortex is the last region to develop in humans and is usually not complete until the child reaches its teens (Gogtay et al., 2004). Emotional deficits and decision-making deficits often go hand in hand with damage to the ventromedial prefrontal cortex. Damasio notes that a "...reduction in emotion may constitute an equally important source of irrational behavior" (Damasio, 1994, p. 53). A certain degree of anxiety, it seems, is necessary to keep character in check.